Perspective Article

Strategic Decision-Making in the Age of AI: A Conceptual Framework for Managers


Orlando Rivero

Southwest Florida Healthcare Consultants, LLC, USA

Abstract:

As Artificial Intelligence (AI) continues to embed itself into the core of business operations, leaders face a growing challenge: making room for machine intelligence without sidelining human judgment, ethics, or long-term strategic thinking. This paper presents a five-pillar framework to help managers integrate AI responsibly and effectively into decision-making processes. The model draws on strategic management theory and is informed by real-world examples from various sectors, including healthcare, finance, logistics, and education. The framework highlights five key areas: data literacy, ethical governance, AI-enhanced intuition, transparency and explainability, and risk assessment. These pillars are not standalone concepts—they work together, offering a practical roadmap for moving beyond scattered or experimental AI efforts toward intentional, organization-wide transformation. Rather than treating AI as a replacement for human insight, the framework emphasizes complementarity, drawing on theories such as bounded rationality, sociotechnical systems, and the Technology Acceptance Model. Through applied examples, the paper demonstrates how companies are addressing real challenges, such as algorithmic bias and decision risk, while navigating the promise and pitfalls of AI. Ultimately, this framework contributes to ongoing conversations about digital transformation by offering a grounded, flexible approach that balances performance goals with ethical responsibility. It also outlines the capabilities leaders will need to ensure AI-enabled strategies are scalable, context-aware, and adaptable to future change.

Keywords: Artificial Intelligence (AI), Strategic Decision-Making, Data Literacy, Ethical Governance, Human–AI Collaboration, Transparency and Explainability

Introduction

In today’s fast-paced and unpredictable business landscape, artificial intelligence (AI) has emerged as a powerful tool with the potential to reshape how strategic decisions are made. Across industries, managers are encouraged—or in some cases, expected—to adopt AI tools to drive faster, more informed decision-making (Harvard Digital Data Design Institute, 2024). However, despite the excitement, integrating AI into strategic workflows is not as straightforward as it might seem. Questions around ethics, trust, and the evolving role of human judgment complicate the process (Wu et al., 2023). Even as AI tools become increasingly advanced, many organizations continue to struggle with their effective use. Often, no clear roadmap is in place, leading to underuse, overreliance, or even ethical missteps that can erode stakeholder trust (Lu & Lo, 2024). The issue is not just about what AI can do but how managers can thoughtfully bring it into their strategic thinking. This paper proposes a conceptual framework to support managers in doing just that. Drawing on decision theory, technology adoption models, and insights from management research, this work aims to provide a practical, theory-informed guide for navigating both the opportunities and the risks of AI in strategic settings (Csaszar et al., 2024; Venkatesh & Davis, 2000).

Strategic decision-making typically involves making high-stakes choices that influence an organization’s long-term direction. These decisions often come with high uncertainty, significant resource commitments, and long-lasting consequences. Traditionally, they have relied on human insight, judgment, experience, and intuition. However, the rise of AI has added new capabilities to the mix: predictive analytics, real-time data processing, and automated reasoning (Harvard Digital Data Design Institute, 2024).

Used effectively, AI can support a more dynamic and forward-looking strategy. It enables continuous learning from vast data streams, helping managers spot patterns early and adapt to shifting market conditions. Unlike older decision-support systems that look backward, AI offers tools that help organizations move from reactive to proactive strategy development (Wu et al., 2023).

Grounding this framework in robust theoretical foundations involves drawing on several influential perspectives:

Technology Acceptance Model (TAM)

One of the key frameworks for understanding how people adopt new technologies is the Technology Acceptance Model (TAM), originally developed by Davis (1989). TAM explains user adoption through two main constructs: perceived usefulness, meaning whether users believe a system will improve their performance, and perceived ease of use, meaning whether they believe using it will be free of effort. This model helps shed light on how managers perceive and interact with AI tools in a strategic setting. What matters is not just how powerful the technology is, but whether leaders feel that the tools support their goals and fit into their workflow (Venkatesh et al., 2003).

For example, imagine a sales team given a cutting-edge predictive analytics dashboard. If the tool feels clunky, confusing, or difficult to interpret, there is a good chance it will not be used, regardless of how sophisticated the underlying model is. On the other hand, if the interface is clean, intuitive, and provides real-time insights, adoption rates usually climb, and trust in the system grows. This dynamic was evident at a manufacturing firm that rolled out an AI-powered production forecasting tool. Managers initially resisted it because of its complexity. However, engagement improved significantly after the interface was simplified and the dashboards were tailored to specific roles. Line managers began using the tool regularly, resulting in faster planning cycles and improved inventory management (Appinventiv, 2023).

Bounded Rationality

The theory of bounded rationality, introduced by Herbert Simon, reminds us that decision-makers operate with limits on time, attention, and information. No one has perfect knowledge or endless capacity to process data, especially in complex strategic environments. That is where AI can step in. It helps managers deal with overwhelming amounts of information by highlighting trends, surfacing anomalies, and suggesting potential courses of action. However, while AI may reduce some cognitive load, it does not eliminate bias, and in some cases, it can amplify hidden assumptions embedded in the data or model design (Batool et al., 2023; Segars & Grover, 1993).

Consider the example of a supply chain leader utilizing AI to identify inefficiencies across a regional network. The model might improve forecasting overall, but smaller rural areas might get overlooked if the training data is skewed toward larger urban markets. That kind of blind spot can lead to missed opportunities or poor service in critical locations. Similarly, a city transportation department once used AI to optimize bus routes, only to find the system caused traffic jams during rush hour. The issue? The model had not accounted for certain behavioral changes post-pandemic. When planners stepped in to review the outputs alongside contextual knowledge, they were able to rebalance routes more effectively and improve the rider experience (Batool et al., 2023; Segars & Grover, 1993).
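The rural blind spot described above is the kind of problem a disaggregated evaluation can surface: instead of reporting a single pooled error, forecast error is computed separately for each market segment. The Python sketch below is illustrative only; the segment labels, the toy demand records, and the `ratio_threshold` flagging rule are hypothetical assumptions, not data from the cases cited.

```python
from collections import defaultdict

def weighted_absolute_percentage_error(actuals, forecasts):
    """Total absolute error divided by total actual demand (volume-weighted)."""
    return sum(abs(a - f) for a, f in zip(actuals, forecasts)) / sum(actuals)

def error_by_segment(records):
    """Group (segment, actual, forecast) records and compute the error for each segment."""
    grouped = defaultdict(lambda: ([], []))
    for segment, actual, forecast in records:
        grouped[segment][0].append(actual)
        grouped[segment][1].append(forecast)
    return {seg: weighted_absolute_percentage_error(a, f) for seg, (a, f) in grouped.items()}

def flag_blind_spots(segment_errors, overall_error, ratio_threshold=1.5):
    """Hypothetical rule: flag segments whose error exceeds the overall error by a chosen multiple."""
    return sorted(seg for seg, err in segment_errors.items() if err > ratio_threshold * overall_error)

# Hypothetical weekly demand records: (segment, actual units, forecast units).
RECORDS = [
    ("urban", 1000, 980), ("urban", 1200, 1230), ("urban", 900, 910), ("urban", 1100, 1090),
    ("rural", 100, 60), ("rural", 80, 50),
]

overall = weighted_absolute_percentage_error([r[1] for r in RECORDS], [r[2] for r in RECORDS])
by_segment = error_by_segment(RECORDS)
print(f"overall error: {overall:.1%}")  # pooled error looks small because urban volume dominates
for seg, err in sorted(by_segment.items()):
    print(f"{seg}: {err:.1%}")
print("flagged:", flag_blind_spots(by_segment, overall))
```

With these invented numbers, the pooled error is about 3%, while the rural segment's error is close to 39%, a gap that a single headline accuracy figure would hide.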

Human-AI Collaboration

A growing body of research suggests that the most effective use of AI does not come from replacing human decision-makers, but from combining machine intelligence with human judgment. This idea, often called human-AI collaboration, emphasizes the value of blending analytical power with context, intuition, and ethical reasoning (ResearchGate, 2024). In practice, the best results often happen when people and AI work together, each doing what they do best.

For instance, at a global consulting firm, AI-generated forecasts identify emerging market trends. However, instead of relying solely on the data, the leadership team brings those forecasts into strategy sessions, where experts debate, refine, and sometimes challenge the machine’s recommendations. The result is a more grounded and thoughtful strategy that combines real-world experience with computational insight. A pharmaceutical company followed a similar path, using AI to map potential research and development investments. Researchers did not simply accept the system’s rankings; they applied their own regulatory knowledge and project timelines to fine-tune the options. The resulting strategy outperformed previous planning efforts because it was both data-informed and judgment-driven.

These examples show that AI works best not in isolation, but when it becomes part of a larger collaborative process. It is not about man versus machine; it is about partnership.

Complementary Theoretical Perspectives

In addition to these core theories, our framework incorporates complementary perspectives that capture the broader human, organizational, and environmental factors influencing AI integration. These lenses enrich our understanding of how AI should be embedded into managerial processes, acknowledge the complex socio-organizational dynamics that shape decision outcomes, and reinforce the need for systemic alignment when adopting AI in strategic contexts.

By incorporating these views, the framework becomes more flexible and context-aware, especially when implemented in diverse settings.

Sensemaking Theory

Sensemaking theory explores how individuals interpret complex, ambiguous environments to construct meaning. AI tools can support sensemaking by surfacing patterns and anomalies that might otherwise go unnoticed, improving organizational foresight (Maitlis & Christianson, 2014; Weick, 1995). As organizations navigate fast-changing competitive landscapes, sensemaking becomes essential for identifying shifts in customer preferences, stakeholder expectations, or emerging risks. By augmenting this process with AI-driven insights, managers can more effectively construct narratives and align teams around strategic responses. For example, a retail company analyzing sentiment across thousands of online customer reviews might use AI tools to uncover subtle, emerging concerns about pricing fairness. Such an insight could prompt leadership to adjust its messaging and pricing strategy proactively, before negative perceptions escalate.

Sociotechnical Systems Theory

This approach emphasizes that the success of any technological intervention depends not only on its technical features but also on the surrounding social and organizational systems. Effective AI integration requires alignment with leadership culture, workflows, and team dynamics (Baxter & Sommerville, 2011; Trist & Bamforth, 1951). From a strategic perspective, this theory reminds managers to design AI implementations that fit into broader operational routines, employee capabilities, and governance structures. Ignoring the social context can result in resistance, inefficiencies, or misuse of otherwise sound technological solutions. For instance, a hospital adopting an AI diagnostic assistant might experience pushback from physicians if they feel excluded from its design or if workflows are disrupted. In contrast, when clinicians are involved early in selecting and tailoring the tool, adoption tends to be smoother and outcomes improve.

Conceptual Methodology

This paper employs a conceptual approach to theory building, integrating insights from established literature in decision theory, technology adoption, and AI-human collaboration. The proposed framework was developed through an iterative review of academic sources, practitioner reports, and case-based insights (Moore & Benbasat, 1991).

In the financial sector, regulatory reports and internal audits often highlight ethical concerns around AI-driven credit scoring—insights that inform the emphasis on ethical governance in the model. In healthcare, case studies on diagnostic AI tools have revealed the importance of human oversight, helping shape the pillar of AI-enhanced intuition. Meanwhile, AI-powered demand forecasting models in manufacturing have illustrated the advantages of predictive analytics and the risks of model drift, reinforcing the need for robust risk assessment.
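Model drift, mentioned above in the manufacturing context, is often monitored by comparing the distribution of recent inputs with the distribution the model was trained on. One widely used heuristic is the Population Stability Index (PSI). The Python sketch below is an illustration under stated assumptions: the bin cut points, the toy demand values, and the 0.1 and 0.25 bands (a common industry rule of thumb, not a formal standard) are hypothetical choices rather than details drawn from the cases discussed.

```python
import bisect
import math

def bin_proportions(values, cut_points):
    """Share of values in each bin; n sorted cut points define n + 1 open-ended bins."""
    counts = [0] * (len(cut_points) + 1)
    for v in values:
        counts[bisect.bisect_right(cut_points, v)] += 1
    return [c / len(values) for c in counts]

def population_stability_index(expected, actual, eps=1e-6):
    """PSI = sum over bins of (actual - expected) * ln(actual / expected).

    eps replaces empty-bin proportions so the logarithm stays defined.
    """
    psi = 0.0
    for e, a in zip(expected, actual):
        e, a = max(e, eps), max(a, eps)
        psi += (a - e) * math.log(a / e)
    return psi

def drift_label(psi):
    """Rule-of-thumb bands often quoted in practice; they are conventions, not standards."""
    if psi < 0.1:
        return "stable"
    if psi < 0.25:
        return "moderate shift"
    return "significant shift"

CUT_POINTS = [100, 200, 300]                        # hypothetical weekly-demand bins
BASELINE = [80, 120, 150, 180, 220, 250, 280, 320]  # demand seen during training
RECENT = [210, 230, 260, 290, 310, 330, 350, 400]   # demand seen after deployment

expected = bin_proportions(BASELINE, CUT_POINTS)
actual = bin_proportions(RECENT, CUT_POINTS)
psi = population_stability_index(expected, actual)
print(f"PSI = {psi:.2f} -> {drift_label(psi)}")
```

Empty bins make PSI sensitive to the choice of `eps`, so the band a value falls into is usually more informative than its exact magnitude; in practice, a "significant shift" would trigger human review or retraining rather than an automatic decision.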

Rather than testing hypotheses, this methodology synthesizes key themes into a practical model for strategic decision-making. The five pillars of the framework emerged from identifying recurring managerial challenges and success factors in AI integration across industries. This process involved mapping patterns across finance, healthcare, and manufacturing sectors, where AI adoption has shown promise and complexity.

This approach is particularly suitable for exploring complex, rapidly evolving phenomena, such as AI, where empirical data may be limited or inconsistent. It allows for combining cross-disciplinary perspectives and creating a holistic view that accommodates technological capabilities and organizational realities (Csaszar et al., 2024).

The methodology aligns with calls in management literature to produce theory that informs practice. It emphasizes explanatory richness and practical utility over empirical generalizability (Mäntymäki et al., 2022). In this way, the conceptual model serves as a foundational framework to guide managerial actions while offering a basis for future empirical validation and refinement.

To operationalize these insights, the following sections present a conceptual framework of five interdependent pillars to guide strategic AI integration. The pillars emerged from a synthesis of themes that recur across industries, particularly finance, healthcare, and manufacturing, where AI adoption has revealed both transformative potential and systemic complexity. They also correspond to common challenges faced by organizations attempting to deploy AI tools at scale.

AI in Strategic Decision Making

AI is no longer a back-office tool; it is rapidly becoming central to core strategic activities such as market analysis, forecasting, innovation management, and risk evaluation. Managers must move beyond technical implementation and consider how AI aligns with broader organizational priorities and stakeholder expectations. AI-driven strategy requires a shift in mindset from automation to augmentation, where machine intelligence complements rather than replaces human expertise.

Organizations that successfully integrate AI into their strategic processes often employ deliberate frameworks that emphasize learning, accountability, and interdisciplinary collaboration. For example, companies such as IBM and Unilever have established cross-functional teams to oversee AI governance, while healthcare providers have developed internal certification programs to train managers in interpreting AI-generated clinical insights (Csaszar et al., 2024).

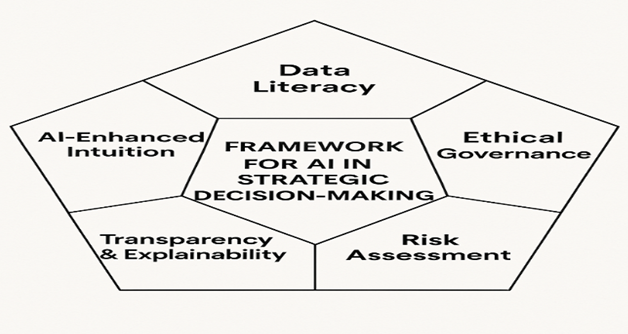

To illustrate this shift toward structured integration, Figure 1 presents the AI-Driven Strategic Decision-Making Framework. This visual outlines five foundational pillars that enable organizations to embed AI strategically, rather than tactically or reactively. Each pillar represents a key managerial or organizational competency that supports the ethical, scalable, and human-centered AI use.

AI-Driven Strategic Decision-Making Framework: Five Foundational Pillars

Proposed Framework

Building on the conceptual foundations and strategic imperatives discussed above, this section introduces a five-pillar framework for the responsible and strategic integration of Artificial Intelligence (AI) into organizational decision-making. As shown in Figure 1, the framework serves as both a diagnostic tool and a practical guide, helping organizations embed AI into core operations in ways that balance technical performance with human values, long-term vision, and ethical accountability.

Unlike traditional models that treat AI implementation as a discrete technological upgrade, this framework views AI integration as a multidimensional organizational transformation. It acknowledges the interplay between managerial competencies, institutional culture, and governance systems, arguing that these dimensions must co-evolve to unlock the full strategic value of AI.

Crucially, the five pillars are interdependent, exhibiting both synergies and trade-offs. For instance, advances in explainability may lead to better risk assessment, while lapses in ethical governance can undermine stakeholder trust regardless of how sophisticated an organization’s data capabilities are. The framework is therefore best understood as a living system that requires ongoing recalibration and alignment.

Data Literacy

Data literacy refers to decision-makers’ ability to critically interpret, question, and contextualize quantitative outputs, particularly those generated by AI systems. It is not limited to technical expertise; it also includes conceptual awareness of how data is sourced, structured, and framed.

Rationale: In an AI-driven environment, poor data literacy at the managerial level can lead to over-reliance on “black box” outputs or flawed models. Decision-makers must recognize statistical anomalies and understand data lineage and potential sources of algorithmic bias.

Examples

At a leading U.S.-based consumer goods company, regional sales managers initially expressed skepticism toward an AI-powered supply chain optimization tool that recommended adjustments to reorder points and distribution schedules. The algorithm, which analyzed real-time sales data, supplier reliability, and seasonal demand fluctuations, was resisted due to perceived complexity and a lack of transparency in how outputs were generated. Many managers, accustomed to relying on historical intuition and manual planning spreadsheets, hesitated to defer to machine-generated recommendations. In response, the company launched a series of targeted training sessions designed to improve data literacy among frontline decision-makers. These sessions introduced managers to key predictive indicators such as lead time variability, stockout probability, inventory turnover ratios, and the logic behind the model’s adaptive learning process. Importantly, the training emphasized how human oversight remained essential, especially when interpreting local market disruptions or supplier anomalies that the model might not capture. Within six months of implementation, the firm observed a 12% reduction in regional stockouts and improved forecast accuracy across multiple product categories—a result consistent with patterns identified in recent research on AI-driven demand forecasting and inventory optimization (Carmatec, 2024; ResearchGate, 2024). In addition to performance improvements, the initiative fostered greater collaboration between supply chain analysts and field managers, encouraging a culture of shared ownership over data-informed decisions.
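The indicators covered in those training sessions (reorder points, lead time variability, stockout probability) are linked in a standard textbook inventory model. The Python sketch below assumes normally distributed daily demand and a lead time independent of demand; the SKU figures and the 95% service level are hypothetical, and the tool described in the case may work quite differently.

```python
import math
from statistics import NormalDist

def lead_time_demand_sd(mean_daily, sd_daily, mean_lead_days, sd_lead_days):
    """Std. dev. of total demand over the lead time, assuming demand and lead time are independent."""
    return math.sqrt(mean_lead_days * sd_daily**2 + mean_daily**2 * sd_lead_days**2)

def reorder_point(mean_daily, sd_daily, mean_lead_days, sd_lead_days, service_level):
    """Expected lead-time demand plus a safety stock of z standard deviations."""
    z = NormalDist().inv_cdf(service_level)
    sd = lead_time_demand_sd(mean_daily, sd_daily, mean_lead_days, sd_lead_days)
    return mean_daily * mean_lead_days + z * sd

def stockout_probability(reorder_pt, mean_daily, sd_daily, mean_lead_days, sd_lead_days):
    """Chance that lead-time demand exceeds the reorder point in one replenishment cycle."""
    sd = lead_time_demand_sd(mean_daily, sd_daily, mean_lead_days, sd_lead_days)
    return 1 - NormalDist(mean_daily * mean_lead_days, sd).cdf(reorder_pt)

# Hypothetical SKU: 100 units/day (sd 20), 4-day lead time that varies by about 1 day.
rop = reorder_point(100, 20, 4, 1, service_level=0.95)
# A planner who ignores lead time variability (sd_lead_days=0) sets a lower reorder point...
naive_rop = reorder_point(100, 20, 4, 0, service_level=0.95)
# ...and faces a much higher stockout risk once the real variability is accounted for.
print(f"reorder point with lead-time variability: {rop:.0f} units")
print(f"naive reorder point: {naive_rop:.0f} units, "
      f"actual stockout risk {stockout_probability(naive_rop, 100, 20, 4, 1):.0%}")
```

With these assumed figures, the reorder point is about 577 units; ignoring lead time variability would set it near 466 units and raise the per-cycle stockout risk from 5% to roughly 27%. This is the kind of reasoning a data-literate manager can use to question, rather than simply accept, a recommended reorder point.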

In the public sector, a U.S. metropolitan transportation authority implemented a capacity-building initiative to enhance AI literacy among senior administrators and planners. As part of its post-pandemic recovery efforts, the agency adopted AI-powered forecasting tools to analyze urban mobility patterns, including transit ridership, congestion points, and shifting commuter flows. While the tools offered real-time data aggregation and predictive capabilities, early models significantly overestimated when commuters would return to public transportation, reflecting assumptions misaligned with evolving hybrid work patterns and behavioral shifts. To address this discrepancy, agency leadership launched a structured training program to help decision-makers interpret AI-generated forecasts critically. The program included scenario planning workshops, training on behavioral data interpretation, and collaborative review sessions involving planners, data scientists, and policy analysts. Through these activities, administrators developed the skills to interrogate model assumptions, question the representativeness of datasets, and incorporate contextual insights drawn from community feedback, socioeconomic data, and lived experience.

As a result, the agency recalibrated its transit service schedules around more evidence-based and context-sensitive recovery trajectories. It reallocated resources to underserved neighborhoods experiencing sustained demand while scaling back service in commercial corridors where foot traffic and ridership remained below pre-pandemic levels. These adjustments improved operational efficiency, enhanced equity in service delivery, and avoided unnecessary expenditure during a period of fiscal constraint. The initiative also strengthened interdepartmental collaboration and fostered a culture of informed skepticism, promoting cross-disciplinary dialogue among planners, technologists, and community stakeholders. By embedding critical reflection into the review of AI-generated outputs, the agency laid the groundwork for more adaptive, transparent, and socially attuned applications of AI in future urban planning. The case shows how capacity-building can extend beyond technical training to reshape institutional mindsets and decision-making norms, an increasingly important outcome as cities embrace data-driven governance, and it reinforces the principle that algorithmic outputs must be complemented by human judgment and stakeholder insight. These outcomes align with recent public sector research calling for greater integration of participatory oversight and human-centered evaluation in AI-assisted planning systems (Harvard Digital Data Design Institute, 2024).
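Part of interrogating model assumptions in a case like this is checking whether forecast errors are random or systematically one-directional. The minimal Python sketch below assumes weekly ridership expressed as a percentage of pre-pandemic levels and a hypothetical 5% bias tolerance; it computes the mean signed percentage error, where a persistently positive value corresponds to the kind of over-forecast of commuter return described above. All figures are invented for illustration.

```python
def forecast_bias(actuals, forecasts):
    """Mean signed percentage error; positive values mean the model over-forecasts."""
    return sum((f - a) / a for a, f in zip(actuals, forecasts)) / len(actuals)

def share_over_forecast(actuals, forecasts):
    """Fraction of periods in which the forecast exceeded what was observed."""
    return sum(f > a for a, f in zip(actuals, forecasts)) / len(actuals)

def needs_review(actuals, forecasts, bias_tolerance=0.05):
    """Hypothetical rule: route the forecast to human review when bias exceeds the tolerance."""
    return abs(forecast_bias(actuals, forecasts)) > bias_tolerance

# Hypothetical weekly ridership, as a percentage of pre-pandemic levels.
observed = [60, 62, 63, 65, 66, 66]
forecast = [70, 74, 78, 82, 85, 88]

print(f"bias: {forecast_bias(observed, forecast):+.1%}")
print(f"weeks over-forecast: {share_over_forecast(observed, forecast):.0%}")
print("needs review:", needs_review(observed, forecast))
```

Unlike absolute error metrics, a signed measure distinguishes a model that is merely noisy from one whose underlying assumptions are off in a consistent direction.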

At a major U.S. telecommunications provider, the organization launched a peer-learning initiative to strengthen data literacy and decision quality among mid- to senior-level product managers. The program focused on real-world scenarios where AI-powered churn prediction models had generated false positives, erroneously identifying long-term, satisfied customers as high-risk for attrition. These inaccuracies risked triggering costly or misaligned retention campaigns, prompting leadership to explore the root causes of misclassification. Participants were organized into cross-functional teams composed of product managers, data scientists, and customer experience leads. Together, they analyzed the model’s logic, traced data lineage, and examined overlooked contextual factors, such as promotional cycles, seasonal plan upgrades, or temporary usage anomalies due to travel. These sessions provided critical insight into the limitations of predictive algorithms and helped managers develop practical heuristics for balancing AI-driven insights with their market knowledge. The initiative ultimately resulted in refinements to the model’s features and thresholds, the establishment of clear escalation protocols for high-impact decisions, and a broader culture of shared responsibility in AI deployment. By integrating human oversight into the model governance process, the organization not only improved the accuracy of its churn interventions but also demonstrated alignment with emerging best practices in responsible AI adoption, particularly those emphasizing transparency, interpretability, and human-AI collaboration (Wu et al., 2023).
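The threshold refinements and escalation protocols mentioned above can be made concrete with a small sketch. The Python code below is a hypothetical illustration, not the provider's model: the scores, outcomes, customer values, the 0.5 and 0.7 thresholds, and the high-value cutoff are all invented. It counts true positives, false positives, and false negatives at a given threshold and routes flagged high-value customers to human review instead of an automated campaign.

```python
from dataclasses import dataclass

@dataclass
class Customer:
    customer_id: str
    churn_score: float   # model's predicted churn probability (hypothetical)
    churned: bool        # observed outcome, used here only for evaluation
    annual_value: float  # hypothetical revenue figure used for escalation

def confusion_at_threshold(customers, threshold):
    """Return (true positives, false positives, false negatives) at a score threshold."""
    tp = sum(c.churn_score >= threshold and c.churned for c in customers)
    fp = sum(c.churn_score >= threshold and not c.churned for c in customers)
    fn = sum(c.churn_score < threshold and c.churned for c in customers)
    return tp, fp, fn

def precision(tp, fp):
    """Share of flagged customers who actually churned."""
    return tp / (tp + fp) if tp + fp else 0.0

def route(customer, threshold, high_value_cutoff):
    """Hypothetical escalation rule: flagged high-value customers go to a person, not a campaign."""
    if customer.churn_score < threshold:
        return "no action"
    if customer.annual_value >= high_value_cutoff:
        return "human review"
    return "automated retention offer"

CUSTOMERS = [
    Customer("A", 0.92, True, 400),
    Customer("B", 0.81, False, 2500),  # e.g., long-tenure customer whose usage dipped during travel
    Customer("C", 0.74, True, 300),
    Customer("D", 0.66, False, 1800),
    Customer("E", 0.58, False, 900),
    Customer("F", 0.35, False, 600),
    Customer("G", 0.22, True, 200),
]

for t in (0.5, 0.7):
    tp, fp, fn = confusion_at_threshold(CUSTOMERS, t)
    print(f"threshold {t}: TP={tp} FP={fp} FN={fn} precision={precision(tp, fp):.2f}")
for c in CUSTOMERS:
    print(c.customer_id, route(c, threshold=0.7, high_value_cutoff=1000))
```

With these invented figures, raising the threshold from 0.5 to 0.7 cuts false positives from three to one without adding false negatives, and the remaining false positive, a high-value customer, is routed to a person. In real data the trade-off is rarely this clean, which is why threshold choices benefit from the cross-functional review the case describes.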

Ethical Governance

Ethical governance entails formalizing principles, policies, and oversight mechanisms that guide the deployment of AI in ways that uphold fairness, inclusivity, accountability, and privacy.

Rationale: Without deliberate governance, AI systems risk amplifying structural biases or violating societal norms. Ethical lapses, whether intentional or the result of negligence, can erode customer trust, attract regulatory penalties, and cause reputational damage.

Examples

A global insurance company launched an internal algorithmic review board composed of ethicists, legal advisors, data scientists, and consumer advocates to evaluate fairness, compliance, and unintended harm in AI model deployment. During an internal audit, the board found that a pricing algorithm disproportionately penalized zip codes associated with historically marginalized communities because of geographic proxies correlated with systemic inequalities. The company restructured the model to prioritize behavioral risk indicators (e.g., driving patterns, claims frequency) over demographic and location-based variables. This shift improved algorithmic fairness while strengthening public trust and regulatory compliance, a growing expectation in financial services AI governance (Batool et al., 2023).

A leading ride-hailing company adopted a participatory audit process to evaluate the fairness of its driver allocation algorithm. The company invited independent researchers and policy experts to stress-test the system for equity in ride distribution across urban, suburban, and rural geographies. The review uncovered measurable disparities, with rural drivers receiving significantly fewer high-value rides. The platform revised its allocation logic and implemented fairness constraints to reduce geographic discrimination. Additionally, it publicly released its fairness impact assessment, setting a precedent for transparency and opening the door to ongoing external scrutiny—an approach aligned with emerging standards for algorithmic accountability in the mobility sector (Lu & Lo, 2024).
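An audit like the one described above typically starts by comparing an outcome rate across groups. The sketch below, using hypothetical ride counts and driver-hours, computes high-value rides per driver-hour by geography and a min-to-max disparity ratio. The 0.8 benchmark borrows the "four-fifths" rule of thumb from U.S. employment-selection guidance purely for illustration; the platform's actual metric and fairness constraints are not described in the source.

```python
def high_value_rate(allocations):
    """allocations maps geography -> (high_value_rides, driver_hours); returns rides per driver-hour."""
    return {geo: rides / hours for geo, (rides, hours) in allocations.items()}

def disparity_ratio(rates):
    """Lowest rate divided by highest rate; 1.0 indicates parity."""
    return min(rates.values()) / max(rates.values())

def below_benchmark(rates, benchmark=0.8):
    """Geographies whose rate falls below the benchmark fraction of the best-served group."""
    best = max(rates.values())
    return sorted(geo for geo, rate in rates.items() if rate / best < benchmark)

# Hypothetical one-month audit sample: (high-value rides, driver-hours).
ALLOCATIONS = {"urban": (600, 2000), "suburban": (540, 2000), "rural": (150, 1000)}

rates = high_value_rate(ALLOCATIONS)
print({geo: round(rate, 2) for geo, rate in rates.items()})
print(f"disparity ratio: {disparity_ratio(rates):.2f}")
print("below benchmark:", below_benchmark(rates))
```

Normalizing by driver-hours, rather than comparing raw ride counts, keeps the comparison from simply reflecting how many drivers operate in each area.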

In the education sector, a university piloting an AI-assisted admissions screening system deliberately involved faculty members, student representatives, and admissions officers in the model evaluation and testing phase. The goal was to ensure that the model did not inadvertently reinforce biases, particularly those favoring standardized test scores over holistic indicators of student potential. Through a series of participatory review sessions, the evaluators found that the model disproportionately discounted applicants from under-resourced high schools, whose academic trajectories often demonstrated resilience, leadership, or upward trends not captured by numerical performance alone. The university recalibrated the model’s weighting schema to integrate qualitative and contextual variables more effectively, including personal essays, first-generation status, and school-specific performance benchmarks. This intervention helped preserve the institution’s commitment to access and diversity while illustrating the importance of embedding domain-specific knowledge into AI system design. The case reflects broader concerns in the literature regarding fairness and transparency in AI applications within education, where high-stakes decisions demand heightened ethical scrutiny and stakeholder participation (Maitlis & Christianson, 2014).

AI-Enhanced Intuition

AI-enhanced intuition refers to leaders' ability to integrate algorithmic insights with experience-based judgment, strategic foresight, and contextual awareness. It resists the false binary between “gut feeling” and “data-driven” decision-making, instead emphasizing complementarity.

Rationale: AI models, while powerful, are bounded by the data they have seen and the parameters they are trained on. Human intuition is critical in interpreting edge cases, navigating ambiguity, and anticipating second-order consequences that data alone cannot surface.

Examples

In the pharmaceutical sector, a CEO used AI-generated competitive intelligence to surface potential acquisition targets based on financial performance, market share growth, and R&D pipeline alignment. While the AI tool effectively narrowed the list of candidates through advanced pattern recognition and benchmarking, the final decision-making process involved a more nuanced assessment grounded in the CEO’s personal knowledge of organizational culture, leadership style, and past merger outcomes. Several seemingly optimal candidates were ultimately excluded due to anticipated integration challenges, including incompatible governance structures and divergent innovation philosophies. This case highlights the strategic role of AI-enhanced intuition, where executive experience supplements quantitative recommendations to mitigate post-acquisition risk—a dynamic increasingly emphasized in research on bounded rationality and strategic sensemaking (Csaszar et al., 2024; Weick, 1995).

In international development, a nonprofit director overseeing donor engagement strategy used a machine-learning model to predict donation likelihood across multiple regions. While the algorithm provided high-level segmentation based on past giving behavior and digital engagement trends, it failed to capture important cultural variables influencing outreach effectiveness in certain geographies. Drawing on field reports and anecdotal insights from local partners, the director adjusted messaging styles and campaign timing to align with cultural norms, holidays, and linguistic preferences. This context-sensitive refinement led to significantly higher conversion rates in underperforming regions. The case illustrates the limitations of AI models trained on decontextualized digital signals and reinforces the importance of human judgment in interpreting algorithmic outputs through a localized lens (Maitlis & Christianson, 2014).

In the finance sector, a seasoned investment manager leveraged AI-generated market forecasts to evaluate emerging market opportunities. The system synthesized economic indicators, social sentiment data, and portfolio correlations, and it recommended entry into several high-yield regions. However, the manager overrode specific recommendations after factoring in geopolitical instability, anticipated regulatory crackdowns, and informal signals not reflected in the data. For instance, one market the algorithm flagged as “low risk” was in the midst of legislative reforms likely to affect foreign capital flows, information accessible only through human networks and regional policy tracking. This example illustrates AI-enhanced intuition, where strategic foresight and situational awareness temper the mechanistic logic of predictive systems (Wu et al., 2023).

Transparency and Explainability

Transparency refers to the openness with which AI systems disclose their mechanisms, while explainability concerns the interpretability of specific outputs. Together, they enable users to understand, trust, and contest algorithmic decisions.

Rationale: Especially in high-stakes environments, such as healthcare, criminal justice, or finance, opacity in AI systems can undermine user trust and institutional legitimacy. Ensuring that outputs are explainable to non-technical stakeholders is a precondition for meaningful oversight and shared accountability.

Examples

In the healthcare sector, a public hospital network implemented an AI-driven triage system to help emergency room (ER) staff prioritize patients by acuity level. The algorithm synthesized inputs such as vital signs, presenting symptoms, pre-existing conditions, and recent admissions history to generate risk scores. Recognizing the high-stakes nature of emergency care, the hospital invested in training programs to ensure physicians and nursing staff could interpret how the model reached its conclusions. These sessions covered feature weighting, input transparency, and typical model error scenarios. As a result, clinicians were more confident in incorporating the AI’s recommendations into time-sensitive decisions and were better equipped to explain prioritization to patients and their families, thereby improving clinical communication and public trust. This case aligns with broader findings in the AI and healthcare literature that underscore the role of explainability in improving decision-making and fostering trust among human users (Harvard Digital Data Design Institute, 2024).
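To make “feature weighting” concrete, the sketch below shows how an additive risk score can report each input’s contribution alongside the total, one simple way a triage tool could show clinicians why a score is high. The feature names and weights are hypothetical placeholders, not clinical values, and the hospital’s actual model is not described in the source; real systems are often nonlinear and would need a dedicated attribution method instead of this simplification.

```python
# Hypothetical, NON-clinical weights chosen only to illustrate per-feature explanations.
WEIGHTS = {
    "systolic_bp_under_90": 3.0,
    "spo2_under_92": 2.5,
    "heart_rate_over_120": 2.0,
    "chest_pain": 1.5,
    "recent_admission": 1.0,
}


def explain_risk_score(patient: dict) -> tuple[float, list[tuple[str, float]]]:
    """Return the total score and the features that contributed to it, largest first."""
    # Only features that are present (truthy) for this patient contribute their weight.
    contributions = {name: w for name, w in WEIGHTS.items() if patient.get(name)}
    total = sum(contributions.values())
    ranked = sorted(contributions.items(), key=lambda kv: kv[1], reverse=True)
    return total, ranked


total, why = explain_risk_score({"systolic_bp_under_90": True, "chest_pain": True})
# total == 4.5; why lists low blood pressure first, then chest pain
```

Showing the ranked contributions next to the score is what lets staff check the model’s reasoning against their own assessment rather than accepting a bare number.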

A leading U.S.-based e-commerce platform integrated explainability layers into its AI-driven recommendation engine, enabling users to see why specific products were being surfaced. Rather than offering opaque suggestions, the interface displayed transparent cues such as “Because you viewed item X” or “Customers who bought Y also liked Z.” This added context increased users’ sense of agency and understanding, particularly in situations of choice overload. The company observed a measurable increase in engagement metrics, including click-through and conversion rates, and a decrease in customer service queries related to irrelevant or confusing recommendations. This example illustrates how even basic levels of explainability can significantly enhance user experience and satisfaction, underscoring the importance of transparency in AI adoption at the consumer level (Wu et al., 2023).
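The cues quoted above can come from something as simple as co-purchase counts. The sketch below is a hypothetical illustration, not the platform’s actual engine: it counts how often items appear in the same order and attaches to each recommendation the viewed item that triggered it, so the explanation falls directly out of the scoring logic.

```python
from collections import Counter
from itertools import combinations


def build_co_purchase_counts(orders):
    """Count, for every item, how often each other item appears in the same order."""
    counts = {}
    for order in orders:
        for a, b in combinations(sorted(set(order)), 2):
            counts.setdefault(a, Counter())[b] += 1
            counts.setdefault(b, Counter())[a] += 1
    return counts


def explain_recommendations(viewed, co_counts, top_n=3):
    """Return (item, reason) pairs; the reason names the viewed item most linked to it."""
    scores = Counter()
    reasons = {}  # item -> (viewed item, co-purchase count) of the strongest link
    for seen in viewed:
        for item, n in co_counts.get(seen, Counter()).items():
            if item in viewed:
                continue  # never recommend something the user already viewed
            scores[item] += n
            if item not in reasons or n > reasons[item][1]:
                reasons[item] = (seen, n)
    return [(item, f"Because you viewed {reasons[item][0]}")
            for item, _ in scores.most_common(top_n)]


orders = [["tent", "stove"], ["tent", "stove", "lamp"], ["stove", "pan"]]
recs = explain_recommendations(["tent"], build_co_purchase_counts(orders))
```

Because the reason is computed from the same counts that rank the items, the explanation cannot drift out of sync with the recommendation, which is the property that makes such cues trustworthy.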

In the criminal justice system, a U.S. state parole board piloted an explainable AI tool to support risk assessments during parole hearings. Aware of the potential ethical and legal implications of opaque decision-making, the board implemented transparency protocols that included the publication of model documentation, variable importance rankings, and performance metrics disaggregated by race, age, and gender. By committing to a public-facing transparency framework, the board strengthened internal oversight and enhanced external legitimacy and community trust. The approach reflects a growing movement toward algorithmic accountability in high-stakes government applications, where explainability is increasingly treated as a procedural and ethical necessity (Batool et al., 2023; Lu & Lo, 2024).
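“Performance metrics disaggregated by race, age, and gender” means computing the same error statistics separately for each group. The sketch below is a hypothetical illustration using plain Python; the record layout (group, predicted high risk, actual outcome) is an assumption, and the source does not state which metrics the board published.

```python
from collections import defaultdict


def disaggregated_metrics(records):
    """records: iterable of (group, predicted_high_risk: bool, actual_outcome: bool)."""
    tallies = defaultdict(lambda: {"tp": 0, "fp": 0, "tn": 0, "fn": 0})
    for group, pred, actual in records:
        key = ("tp" if actual else "fp") if pred else ("fn" if actual else "tn")
        tallies[group][key] += 1

    report = {}
    for group, t in tallies.items():
        n = sum(t.values())
        negatives = t["fp"] + t["tn"]
        positives = t["tp"] + t["fn"]
        report[group] = {
            "n": n,
            "accuracy": (t["tp"] + t["tn"]) / n,
            # Share of people with a negative outcome who were nonetheless flagged high risk.
            "false_positive_rate": t["fp"] / negatives if negatives else None,
            # Share of people with a positive outcome whom the model missed.
            "false_negative_rate": t["fn"] / positives if positives else None,
        }
    return report
```

A single overall accuracy figure can hide large gaps between groups; publishing per-group false positive and false negative rates makes those gaps visible to oversight bodies.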

Risk Assessment

Risk assessment involves proactively identifying, evaluating, and mitigating potential negative outcomes of AI systems, ranging from technical failures to reputational harm or ethical violations.

Rationale: AI systems can fail unexpectedly, especially in dynamic environments. Effective organizations build feedback loops, conduct scenario analyses, and develop contingency protocols to prepare for edge cases, data drift, or model exploitation.

Examples

In the airline industry, a major U.S. carrier implemented synthetic testing environments to evaluate the robustness of its AI-driven dynamic pricing engine. The simulations were designed to replicate extreme operating conditions, including global fuel shortages, regulatory shifts, and pandemic-induced demand collapses. By stress-testing the model outside standard historical parameters, analysts identified unexpected volatility in fare recommendations, particularly on routes sensitive to regional shocks or political instability. These findings prompted the airline to recalibrate several model parameters, incorporate override rules for high-sensitivity routes, and develop contingency protocols to govern human intervention during demand anomalies. This proactive approach exemplifies best practices in AI risk modeling, particularly in sectors where external volatility can amplify algorithmic error and reputational risk (Lu & Lo, 2024).
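The stress-testing and override logic described above can be sketched in a few lines. Everything here is hypothetical: `toy_fare` is a stand-in for a real pricing engine, the scenario values are invented shocks, and the 0.5 spread tolerance and the fare band are illustrative assumptions rather than the carrier’s settings.

```python
from statistics import mean

# Hypothetical shocks: (demand_index, fuel_cost_index), where 1.0 = historical normal.
SCENARIOS = {
    "baseline": (1.0, 1.0),
    "fuel_shortage": (0.9, 1.3),
    "demand_collapse": (0.2, 1.0),
    "regulatory_shift": (0.7, 1.2),
}


def toy_fare(base_fare, sensitivity, demand_index, fuel_index):
    """Toy pricing model: fares follow demand (scaled by route sensitivity) and fuel cost."""
    return base_fare * (1 + sensitivity * (demand_index - 1)) * fuel_index


def stress_test(routes, max_relative_spread=0.5):
    """Flag routes whose fare spread across scenarios exceeds the tolerance.

    routes: dict of route_id -> (base_fare, sensitivity), with sensitivity in [0, 1].
    """
    flagged = {}
    for route, (base, sens) in routes.items():
        fares = [toy_fare(base, sens, d, f) for d, f in SCENARIOS.values()]
        spread = (max(fares) - min(fares)) / mean(fares)
        if spread > max_relative_spread:
            flagged[route] = round(spread, 3)
    return flagged


def guarded_fare(model_fare, base_fare, band=(0.7, 1.5)):
    """Override rule for flagged routes: clamp the recommendation to a band around the base fare."""
    low, high = base_fare * band[0], base_fare * band[1]
    return min(max(model_fare, low), high)


flagged = stress_test({"ROUTE_A": (100.0, 0.3), "ROUTE_B": (100.0, 1.0)})
```

Here only the highly sensitive route exceeds the tolerance, so only its recommendations would pass through `guarded_fare` and the human-intervention protocol; stable routes keep full model autonomy.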

A fintech startup specializing in digital payments conducted “red team” exercises to expose vulnerabilities in its AI-powered fraud detection system. In these controlled simulations, internal staff were encouraged to adopt the mindset of adversaries—intentionally testing edge cases, mimicking spoofed behaviors, and feeding manipulated input data into the model. The exercises surfaced several weaknesses, including delayed detection of first-time fraud and over-flagging of legitimate international transactions.

Following the exercise, the firm refined its training data, implemented tiered risk thresholds, and introduced a parallel human review layer for flagged anomalies. The process improved the model’s resilience and reinforced a broader organizational culture of adversarial thinking—an increasingly recognized pillar of responsible AI deployment in financial services (Batool et al., 2023).
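The “tiered risk thresholds” and “parallel human review layer” can be illustrated with a small routing function. This is a hypothetical sketch: the tier names, the 0.90 and 0.60 cut-offs, and the actions are assumptions for illustration, and real thresholds would be calibrated on labeled fraud outcomes.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Tier:
    name: str
    min_score: float  # inclusive lower bound on the model's fraud score (0 to 1)
    action: str


# Hypothetical thresholds, ordered from strictest to most permissive.
TIERS = (
    Tier("high", 0.90, "block"),
    Tier("medium", 0.60, "hold"),
    Tier("low", 0.00, "approve"),
)


def tier_for(score: float) -> Tier:
    if not 0.0 <= score <= 1.0:
        raise ValueError(f"score out of range: {score}")
    for tier in TIERS:
        if score >= tier.min_score:
            return tier
    raise AssertionError("unreachable: the low tier starts at 0")


def triage(transactions):
    """Route (txn_id, score) pairs; every blocked or held transaction also goes to human review."""
    outcome = {"approve": [], "hold": [], "block": [], "human_review": []}
    for txn_id, score in transactions:
        action = tier_for(score).action
        outcome[action].append(txn_id)
        if action != "approve":
            outcome["human_review"].append(txn_id)
    return outcome
```

Compared with a single cut-off, a middle “hold” tier lets borderline cases, such as legitimate international transactions that the model tends to over-flag, reach a human reviewer instead of being rejected outright.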

In the autonomous mobility sector, a vehicle manufacturer partnered with insurance providers, legal consultants, and municipal regulators to simulate worst-case scenarios for AI-driven urban deployment. The simulations included cases of system failure in pedestrian-dense zones, unpredictable weather conditions, and data spoofing attacks on sensor inputs. These scenario-planning exercises informed several design and governance decisions, including the addition of redundant sensors, geofencing rules for complex zones, and real-time override capabilities. Furthermore, the collaboration enabled preemptive alignment with regulatory expectations, helping streamline future certifications and public rollout. This example highlights the importance of multi-stakeholder stress-testing in sectors where AI decisions intersect with public safety, liability, and cross-jurisdictional oversight (Harvard Digital Data Design Institute, 2024).

Managerial Blueprint for Responsible and Strategic AI Integration

These five pillars (Data Literacy, Ethical Governance, AI-Enhanced Intuition, Transparency and Explainability, and Risk Assessment) comprise an integrated roadmap for strategic and human-centered AI adoption. As depicted in Figure 1, the pillars do not operate in isolation; they interact dynamically, each reinforcing and enabling the others to form a cohesive, adaptive, and resilient AI governance architecture. A breakdown in one domain, such as insufficient data literacy or poor model transparency, can cascade into challenges across the entire decision-making ecosystem, undermining both technical performance and stakeholder trust. By embedding these principles into strategic planning, operational workflows, and leadership development, organizations can move beyond narrow, efficiency-driven implementations toward genuinely transformational value creation. This includes enhancing analytical capabilities, improving resource allocation, and shaping a culture of responsibility and critical engagement with intelligent systems. As AI becomes more deeply embedded in core business processes, from customer service and talent acquisition to pricing strategy and innovation management, these pillars provide robust ethical and managerial scaffolding. Furthermore, the framework encourages organizations to treat AI implementation as a continuous journey rather than a one-time deployment. It supports ongoing learning, cross-functional collaboration, feedback-driven iteration, and ethical reflection, competencies that are essential in a period of accelerating technological change. While the framework does not eliminate uncertainty, it offers a principled structure for navigating it, enabling leaders to make informed, inclusive, and accountable choices as they shape the future of AI-enabled strategy.

Ultimately, the five pillars support not just the adoption of AI but the cultivation of organizational maturity, where systems, people, and values align to ensure that artificial intelligence serves long-term strategic goals while remaining responsive to the needs of diverse stakeholders and evolving societal expectations.

Managerial Implications

To further illustrate how this framework can be applied in dynamic environments, consider the case of a multinational retail company implementing AI to optimize pricing strategies. The organization used the framework to identify gaps in data literacy among product managers, highlighting the need for foundational training in interpreting algorithmic outputs.

Following targeted education efforts, pricing decisions became more data-informed and responsive to market trends, resulting in a measurable uplift in margin.

Similarly, a telecommunications firm used the framework to revise its AI governance policies after discovering that its customer churn prediction model disproportionately flagged customers from specific demographic groups. The firm’s AI ethics review board intervened, updated training data, and established a feedback loop with the marketing and legal departments, aligning AI use with corporate responsibility goals.
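One way a review board might detect disproportionate flagging of the kind described above is to compare each demographic group’s flag rate with the overall rate. The sketch below does this in plain Python. The 0.8 to 1.25 tolerance band is an assumption loosely borrowed from the “four-fifths” heuristic in US employment-selection guidance, not something the source specifies, and the group labels are hypothetical.

```python
from collections import Counter


def disproportion_report(rows, low=0.8, high=1.25):
    """rows: iterable of (group, flagged: bool).

    Returns group -> (flag rate / overall flag rate, outside tolerance band?).
    """
    totals, flagged = Counter(), Counter()
    for group, is_flagged in rows:
        totals[group] += 1
        flagged[group] += int(bool(is_flagged))

    overall = sum(flagged.values()) / sum(totals.values())
    if overall == 0:
        return {}  # nobody was flagged, so there is no disparity to measure

    report = {}
    for group in totals:
        ratio = (flagged[group] / totals[group]) / overall
        report[group] = (round(ratio, 3), not (low <= ratio <= high))
    return report


rows = ([("X", i < 2) for i in range(10)]
        + [("Y", i < 6) for i in range(10)]
        + [("Z", i < 4) for i in range(10)])
report = disproportion_report(rows)
```

A ratio outside the band is a prompt for investigation (for example, checking the training data, as the firm did), not proof of unfairness on its own.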

To ensure the effective implementation of AI in strategic decision-making, organizations must embrace a mindset of continuous learning and systemic alignment. Managerial roles must evolve accordingly: managers need not only to adopt the right tools but also to rethink how decisions are made, evaluated, and communicated. Managers are no longer just decision-makers; they are decision architects, responsible for designing environments where AI complements human insight.

The proposed framework is a practical guide for managers navigating the complexities of AI integration in strategic decision-making. Each of the five pillars represents a distinct area of capability that must be addressed to align AI applications with organizational goals and values.

Application of the Framework

Managers can apply the framework by evaluating their organization’s readiness in each of the five areas. This process should begin with a candid assessment of the organization’s current AI capabilities and maturity levels. Managers should involve cross-departmental teams, including IT, compliance, HR, and line-of-business leaders, to gain a holistic view of strengths and potential blind spots. A readiness evaluation also creates space for transparent conversations about the organization’s risk appetite, data governance practices, and leadership alignment on AI objectives. Such assessments often reveal not only technical gaps but also deeper organizational and cultural bottlenecks, such as misaligned incentives, a lack of digital infrastructure, or insufficient change management protocols, that could hinder AI adoption. Leaders should combine qualitative methods (e.g., stakeholder interviews, leadership workshops) with quantitative tools (e.g., readiness surveys, dashboards, audit checklists) to build a comprehensive and actionable picture of where their organization stands. Ideally, each area should be mapped against maturity stages, from foundational awareness to enterprise-level optimization, allowing organizations to benchmark progress and allocate resources accordingly.
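Mapping each pillar against maturity stages can be as simple as averaging ratings and applying stage thresholds. The sketch below assumes 1 to 5 ratings gathered from surveys or workshops; the first and last stage labels follow the text, while the two middle labels and all numeric thresholds are illustrative assumptions.

```python
from statistics import mean

PILLARS = ("data_literacy", "ethical_governance", "ai_enhanced_intuition",
           "transparency_explainability", "risk_assessment")

# (minimum average rating, stage label); the middle two labels are hypothetical.
STAGES = (
    (1.0, "foundational awareness"),
    (2.5, "emerging practice"),
    (3.5, "integrated practice"),
    (4.5, "enterprise-level optimization"),
)


def stage_for(avg: float) -> str:
    """Return the highest stage whose threshold the average rating meets."""
    label = STAGES[0][1]
    for threshold, name in STAGES:
        if avg >= threshold:
            label = name
    return label


def readiness_profile(responses: dict[str, list[int]]):
    """responses maps each pillar to its 1-5 ratings; returns (profile, weakest pillar)."""
    missing = set(PILLARS) - set(responses)
    if missing:
        raise ValueError(f"no ratings for: {sorted(missing)}")
    profile = {}
    for pillar in PILLARS:
        avg = mean(responses[pillar])
        profile[pillar] = (round(avg, 2), stage_for(avg))
    weakest = min(PILLARS, key=lambda p: profile[p][0])
    return profile, weakest
```

Reporting the weakest pillar alongside the stage map gives leaders a concrete starting point for allocating training and governance resources.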

Conducting data literacy audits to assess the analytical fluency of leadership teams: For example, a financial services firm evaluated how well its executives could interpret AI-generated predictive risk scores and trend visualizations, then used the results to design a customized upskilling curriculum tailored to their strategic roles. Similarly, a global retailer piloted a dashboard literacy program for department heads, resulting in quicker identification of product demand anomalies and more efficient promotional planning.

Establishing or reviewing ethical governance policies for AI use: This could include setting up cross-functional AI review committees or ethics boards, following the example of global firms such as Microsoft, which have institutionalized reviews of algorithmic impacts on fairness, privacy, and accountability. Another approach is to engage external watchdogs or ethics consultants to audit internal AI projects for bias, transparency, and unintended social impacts.

Encouraging executive workshops on AI-enhanced intuition and decision augmentation: These sessions can simulate real-world scenarios where AI recommendations are blended with managerial judgment, helping leaders practice balancing data-driven inputs with strategic foresight. For instance, an aerospace company held scenario-based retreats in which executives examined AI-generated safety forecasts alongside past engineering reports, cultivating trust and discernment.

Selecting AI platforms that prioritize transparency and explainability features: A healthcare provider choosing between diagnostic tools may favor systems with explainable outputs and transparent reasoning paths, enabling clinicians to review model logic and verify recommendations against established clinical guidelines. In the energy sector, a utility company implemented AI load-forecasting tools with visual traceability to model logic, allowing planners to defend decisions during regulatory audits.

Integrating AI-related risks into enterprise risk management frameworks: A multinational logistics company might incorporate AI-driven forecasting errors into business continuity planning, adjusting thresholds for inventory control or rerouting contingencies in the event of model drift. In another example, a financial institution revised its operational risk model to include algorithmic trading misfires, ensuring safeguards were built into both systems and human override protocols.
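Folding model drift into continuity planning requires a measurable trigger. One widely used drift statistic is the population stability index (PSI), which compares the distribution of a model input or score in live data against a baseline. The sketch below computes it with the standard library; the bin edges are supplied by the caller, and the 0.10 and 0.25 trigger levels are common rules of thumb, not a standard and not values taken from the source.

```python
import math
from bisect import bisect_right


def _bin_shares(values, edges, eps=1e-6):
    """Share of values in each bin; empty bins get eps so the log term stays finite."""
    if not values:
        raise ValueError("need at least one value")
    counts = [0] * (len(edges) + 1)
    for v in values:
        counts[bisect_right(edges, v)] += 1
    return [max(c / len(values), eps) for c in counts]


def population_stability_index(expected, actual, edges):
    """PSI = sum over bins of (actual_share - expected_share) * ln(actual_share / expected_share)."""
    e = _bin_shares(expected, edges)
    a = _bin_shares(actual, edges)
    return sum((ai - ei) * math.log(ai / ei) for ai, ei in zip(a, e))


def drift_action(psi, watch=0.10, act=0.25):
    """Map PSI to a (hypothetical) contingency step in the continuity plan."""
    if psi >= act:
        return "invoke contingency plan"
    if psi >= watch:
        return "investigate"
    return "no action"


baseline = [1, 2, 3, 4, 5, 6, 7, 8]
live = [7, 8, 7, 8, 7, 8, 1, 2]
action = drift_action(population_stability_index(baseline, live, edges=[2.5, 4.5, 6.5]))
```

Tying each PSI band to a named action is what turns drift monitoring from a dashboard metric into part of the enterprise risk framework: the override and rerouting contingencies activate on a pre-agreed signal rather than ad hoc.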

Training, Culture, and Tools

To illustrate these concepts in action, consider a global logistics company that launched a data literacy boot camp to teach mid-level managers how to engage with AI-generated shipment forecasts. As a result, managers were better equipped to identify errors in real-time tracking data and avoid supply chain disruptions.

A pharmaceutical firm facing public scrutiny over algorithmic bias developed a transparent AI ethics policy and invited patient advocacy groups to its advisory board. This improved internal practices and built external stakeholder trust.

At a large tech firm, executive leadership underwent immersive workshops focused on AI-enhanced intuition, pairing predictive tools with business simulations. These experiences helped leaders learn when to override or adapt AI recommendations based on context and judgment.

In a public sector example, a government agency deployed explainable AI dashboards to visualize resource allocation outcomes. The transparency improved employee engagement and boosted adoption rates among frontline managers who were previously hesitant to use algorithm-driven systems.

Likewise, a regional bank included AI risk metrics in its quarterly strategy review, ensuring ongoing attention to algorithmic drift, regulatory changes, and public sentiment.

Training initiatives should extend beyond technical skills, offering managers targeted programs in ethical reasoning, cognitive bias awareness, and best practices for AI-human collaboration. Organizations might partner with academic institutions, professional development firms, or AI vendors to offer certifications or workshops aligned with each pillar.

Culturally, organizations must foster psychological safety, curiosity, and interdisciplinary collaboration. Managers should be encouraged to challenge algorithmic outputs, raise ethical concerns, and contribute to shaping how AI systems are deployed.

On the tooling side, adopting AI systems should be accompanied by explainable interfaces, dashboard visualizations, audit trails, and feedback mechanisms. These tools empower managers to interpret AI outputs in context, engage constructively with data scientists, and retain strategic control over final decisions.

In sum, this framework’s practical value lies in its ability to guide AI adoption and implementation through a deliberate focus on people, processes, and organizational learning.

Future Research

While the proposed conceptual framework provides a structured guide for integrating AI into strategic decision-making, there remains a critical need for empirical validation and contextual exploration. Future research should focus on testing the effectiveness of the five-pillar model in real-world organizational settings. Longitudinal case studies, cross-sectional surveys, and field experiments could evaluate the influence of each pillar on decision-making quality, organizational performance, and stakeholder outcomes.

Empirical studies should also explore potential mediating and moderating variables, such as organizational culture, digital maturity, and leadership style, that may influence the relationship between AI integration and strategic outcomes. This will provide a more nuanced understanding of the conditions under which the framework is most effective.

Cross-cultural and cross-sectoral studies can further enrich the framework by exploring its relevance across geographic regions and industries. For example, does ethical governance take on different dimensions in heavily regulated sectors such as finance or healthcare versus fast-moving consumer goods? How do SMEs adopt or adapt the framework differently from multinational corporations?

The framework could also inform the development of diagnostic tools or maturity models for assessing organizational readiness for an AI-driven strategy. Researchers could create validated instruments to measure the five pillars and track organizational progress.

Finally, new risks and capabilities will emerge as AI technologies evolve, particularly generative AI and autonomous decision-making. Future research should regularly revisit and refine the framework, ensuring it remains responsive to the rapidly changing technological landscape.

Conclusion

Artificial intelligence is reshaping the strategic decision-making landscape, compelling organizations to rethink how they harness data, structure leadership, and evaluate risks. This paper proposes a five-pillar conceptual framework to guide managers in integrating AI into strategy with confidence and responsibility. By grounding the framework in well-established theories and managerial practice, it offers a model that is both theoretically informed and practically actionable.

Data literacy, ethical governance, AI-enhanced intuition, transparency and explainability, and risk assessment are essential building blocks for creating AI-ready organizations. These dimensions prepare managers to engage effectively with AI systems and help ensure that decision-making remains human-centered, ethically grounded, and aligned with long-term strategic goals.

As AI technologies continue to evolve, the role of human judgment will become even more critical. Machines will not replace managers; rather, managers’ success will hinge on their ability to integrate AI into strategic thinking in ways that respect context, uncertainty, and organizational values. This requires cultivating a new leadership mindset that combines data fluency, ethical foresight, and cross-functional collaboration.

The future of strategic leadership lies not in choosing between human and machine, but in shaping a new synergy. When implemented responsibly, AI can be a powerful amplifier of human judgment, creativity, and vision. This framework serves as a roadmap for navigating that transformation and calls on leaders to shape a future where intelligent systems and human insight work together to drive sustainable, inclusive, and innovative strategies.

References

Appinventiv. (2023, October 27). Predictive analytics in manufacturing: Applications, benefits, and challenges. https://appinventiv.com/blog/predictive-analytics-in-manufacturing/

Batool, A., Ameen, N., & Paul, J. (2023). Ethical issues in AI: A systematic literature review and research agenda. Journal of Business Ethics, 182(3), 689–706. https://doi.org/10.1007/s10551-021-04963-6

Baxter, G., & Sommerville, I. (2011). Socio-technical systems: From design methods to systems engineering. Interacting with Computers, 23(1), 4–17. https://doi.org/10.1016/j.intcom.2010.07.003

Carmatec. (2024, October 3). AI for inventory management explained. https://www.carmatec.com/blog/ai-for-inventory-management-explained

Csaszar, F. A., Kleinbaum, A. M., & Balagopal, S. (2024). Navigating AI-enabled strategy: Cognitive limits and organizational design. Strategic Management Journal. Advance online publication. https://doi.org/10.1002/smj.3462

Davis, F. D. (1989). Perceived usefulness, perceived ease of use, and user acceptance of information technology. MIS Quarterly, 13(3), 319–340. https://doi.org/10.2307/249008

Harvard Digital Data Design Institute. (2024). AI and the future of decision-making. Harvard Business School Publishing. https://d3.harvard.edu/the-future-of-decision-making-how-generative-ai-transforms-innovation-evaluation/

Lu, L., & Lo, J. (2024). Strategic AI integration: Frameworks and failures. California Management Review, 66(1), 58–77. https://doi.org/10.1177/00081256231194655

Maitlis, S., & Christianson, M. (2014). Sensemaking in organizations: Taking stock and moving forward. Academy of Management Annals, 8(1), 57–125. https://doi.org/10.5465/19416520.2014.873177

Mäntymäki, M., Baiyere, A., & Islam, A. K. M. N. (2022). Digital transformation research agenda: A multidisciplinary perspective. Journal of Strategic Information Systems, 31(2), 101695. https://doi.org/10.1016/j.jsis.2022.101695

Moore, G. C., & Benbasat, I. (1991). Development of an instrument to measure the perceptions of adopting an information technology innovation. Information Systems Research, 2(3), 192–222. https://doi.org/10.1287/isre.2.3.192

ResearchGate. (2024). Human-AI collaboration and strategic management. https://www.researchgate.net/publication/AI_collaboration_strategy

Segars, A. H., & Grover, V. (1993). Re-examining perceived ease of use and usefulness. MIS Quarterly, 17(4), 517–525. https://doi.org/10.2307/249590

Trist, E. L., & Bamforth, K. W. (1951). Some social and psychological consequences of the Longwall method of coal-getting. Human Relations, 4(1), 3–38. https://doi.org/10.1177/001872675100400101

Venkatesh, V., & Davis, F. D. (2000). A theoretical extension of the technology acceptance model: Four longitudinal field studies. Management Science, 46(2), 186–204. https://doi.org/10.1287/mnsc.46.2.186.11926

Venkatesh, V., Morris, M. G., Davis, G. B., & Davis, F. D. (2003). User acceptance of information technology: Toward a unified view. MIS Quarterly, 27(3), 425–478. https://doi.org/10.2307/30036540

Weick, K. E. (1995). Sensemaking in organizations. Sage.

Wu, X., Lin, C., & Rajagopalan, B. (2023). AI for strategic insights: A review of applications and challenges. MIT Sloan Management Review, 65(2), 44–53. https://sloanreview.mit.edu/article/ai-for-strategic-insights/

Keywords

Artificial Intelligence (AI); Strategic Decision-Making; Data Literacy; Ethical Governance; Human–AI Collaboration; Transparency and Explainability

How to cite this article

Rivero, O. (2025). Strategic decision-making in the age of AI: A conceptual framework for managers. European Journal of Studies in Management and Business, 35, 1-17. https://doi.org/10.32038/mbrq.2025.35.01

Acknowledgments

Not applicable.

Funding

Not applicable.

Conflict of Interests

The author declares no conflicts of interest.

Open Access

This article is licensed under a Creative Commons Attribution 4.0 International License, which permits use, sharing, adaptation, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons license, and indicate if changes were made. You may view a copy of Creative Commons Attribution 4.0 International License here: http://creativecommons.org/licenses/by/4.0/